These Fake AI “making-of” Videos that Fool People

Published by Joseph SARDIN, on

Summary

- Fake AI BTS videos mimic big-film production tropes

- The gear looks believable, but the details don’t add up

- Soon, spotting the fake will be much harder

- Visual “proof” collapses, and trust collapses with it

- The fix is provenance, not eyeballing

You’re scrolling. A video pops up: an “unreleased making-of” from a cult classic. Everything is there: the bustle of a set, the camera weaving through the action, focused crew members, confident gestures, and all the film gear that reassures you. You think: if you can see the cables, monitors, boom poles, and harnesses, it has to be real. And that’s exactly where the trap snaps shut.

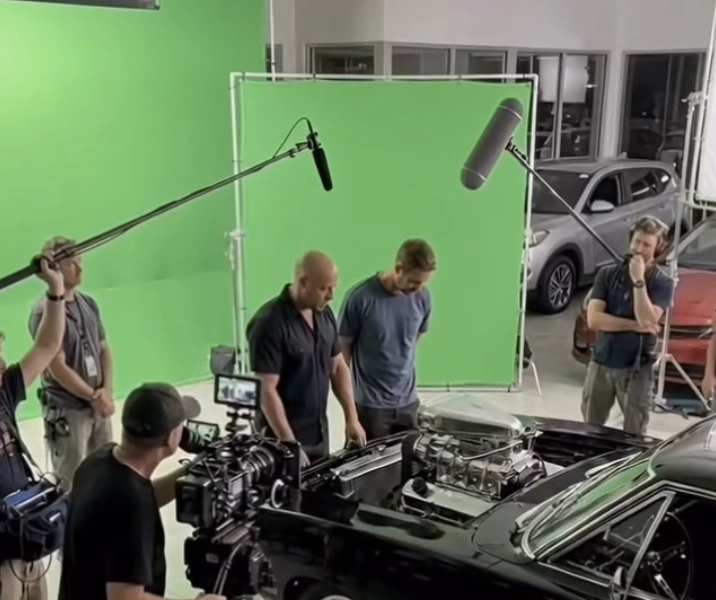

These AI-generated fake behind-the-scenes videos are multiplying, often tuned to look like on-the-ground footage. They borrow the aesthetics of the real thing: slightly blurry images, “stolen” framing, imperfect light, controlled background noise. But under that patina, the AI manufactures a costume version of these jobs: it copies the outward signs while staying very far from any working reality.

Gear as a disguise

The public recognizes a few totems: a cinema camera, a slate, a walkie, a headset, a boom pole. So the AI places them on-screen as evidence. But a film set isn’t a catalog of objects. It’s a chain of constraints.

On the image side, for example, equipment never exists in isolation: the camera implies a full system. There’s the logic of lenses and accessories, focus pulling, batteries, video links, the director’s monitor feed, sometimes an operator cart and an assistant cart, continuity markers, protective covers… In some fake BTS clips, you’ll see a camera that screams “cinema” handled like a lightweight device, with none of the ecosystem that normally comes with it, monitors that don’t show the scene being shot, gear that vanishes, objects that float… It looks real if you don’t do the job, but it rings hollow to anyone who does.

Lighting and grip are no different: a fixture with no plausible power setup, flags placed “to look cool,” a crane with no room to move, safety measures missing where they would be mandatory, or sometimes present where they serve no purpose. You recognize the iconography, not the execution.

And on the sound side, without making it the center of the debate, you see the same mechanism: the boom pole becomes set dressing, sometimes placed where it would be useless (in space or underwater), with none of the gear it needs (wireless packs, cables), no realistic handling (sometimes it’s literally floating), and none of the discreet ecosystem that supports the work. Yet sound, like image, isn’t an object. It’s a method.

Why it’s dangerous, even if it’s “just a BTS”

You could shrug: after all, they’re “just” viral videos. But they touch something very sensitive that affects everything: trust. For a long time, images were the proof of last resort. You could doubt a story, but “there’s a video” was enough to settle it. In the past few years, video has become so easy to fabricate that this reflex is now obsolete.

The result is paradoxical. The general public, already far removed from what happens behind the scenes, never had an easy way to verify information, but images served as a guardrail. When that guardrail disappears, everything becomes debatable. Real images get dragged down to the level of fake ones. So do real professions: if a fake BTS shows nonsense with confidence, it creates lasting confusion about what a shoot really is, what a gesture costs, what competence means, and why a crew exists.

In the end, you don’t just discredit one piece of information. You discredit the very idea that reliable information exists. And that’s a slippery slope: it feeds cynicism, scams, polarization, and the “you can’t believe anyone anymore” mindset.

When fakes become undetectable, the reflex has to change

If fakes soon become indistinguishable “to the naked eye,” the solution won’t be turning everyone into experts at hunting glitches. The right reflex is to move from detection to provenance: where does this content come from, who published it, with what track record, what transformations did it go through?

That’s the idea behind traceability initiatives like Content Credentials and the C2PA (Coalition for Content Provenance and Authenticity) standard: attach cryptographically signed information to the media about its origin and its journey, rather than hoping you can guess what’s real by gut feel. It doesn’t solve everything, but it restores a compass.

Until adoption becomes widespread, a few simple habits still help: favor official channels (studios, crews, clearly identified production accounts), look for corroboration (same images elsewhere, same dates, same people), and above all, accept a new rule: a video is no longer “proof by default.”

Your images: a brutally effective teaching tool

If you share screenshots of fake BTS clips, you can turn them into a quick “gear reading” exercise: show one detail and ask the viewer a question. Not to shame anyone, but to teach people how to look. This kind of concrete, hands-on pedagogy restores the value of the jobs behind the camera: you come to understand that a set isn’t folklore. It’s rigor.

Ultimately, the point isn’t to cling to some nostalgia for “the real.” It’s to protect our collective ability to distinguish document from decoration. And in that fight, reminding people what technicians actually do, with their tools, constraints, and on-the-ground intelligence, is already an act of resistance.

What worries you more: the arrival of undetectable fakes, or the fact that nobody will trust real images anymore?


Joseph SARDIN - Founder of BigSoundBank.com